A Hacker's Guide to Post-Quantum Cryptography: ML-KEM & FIPS 203

This is Part Two of the Hacker's Guide to Cryptography series. If terms like "group", "field", or "modular arithmetic" feel unfamiliar, go read it first. It'll take 20 minutes and you'll thank yourself.

The Quiet Apocalypse

You've heard the buzzword. Quantum computing. Maybe it sounds like science fiction, or marketing. But here's the uncomfortable truth the security industry is quietly sprinting away from:

Not "theoretically, maybe, one day." NIST, the US standards body, has already called it. In 2022, they began standardising the replacements. In 2024, they published the final standards. The migration is happening right now.

The algorithm we're dissecting today, ML-KEM, standardised as FIPS 203, is one of those replacements. It's already shipping in Chrome, AWS, Cloudflare, and the Linux kernel.

So. What is it, and why does the math actually work?

First: What Problem Are We Solving?

Before we touch a single equation, let's be clear about what kind of algorithm ML-KEM is.

You know two flavors of cryptography:

- Symmetric encryption (AES, ChaCha20): blazing fast, but both sides need the same secret key. How do you share that key securely in the first place?

- Asymmetric encryption (RSA): solves the sharing problem, but slow, and, critically, broken by quantum computers.

The bridge between them is a Key Encapsulation Mechanism, or KEM.

A KEM does exactly one job:

Alice generates a fresh random symmetric key $K$. She encapsulates it using Bob's public key. Bob decapsulates it using his private key. Both now share $K$, without ever transmitting $K$ over the wire. Then they use $K$ for fast symmetric encryption.

- Bob hands Alice a locked box with his open padlock on it (public key)

- Alice puts a randomly generated secret note ($K$) inside and snaps the padlock shut

- Only Bob can open the box with his key (private key) and read the note

- Now both Alice and Bob know $K$, and nobody who intercepted the box learned anything

That's a KEM. ML-KEM is a KEM that's secure against quantum computers.

Why Is RSA Broken by Quantum?

RSA's security rests on one single fact: factoring large numbers is hard. Given $n = p \times q$ where $p$ and $q$ are huge primes, you can't find $p$ and $q$ efficiently.

In 1994, Peter Shor proved that a quantum computer can factor numbers in polynomial time. That's not "faster" in the casual sense, it's an entirely different complexity class. RSA's hard problem evaporates.

The same goes for Elliptic Curve cryptography (ECDH, ECDSA). Shor's algorithm kills it too.

Yes, I know folks: there is no quantum computer today that can run Shor's algorithm at cryptographically relevant scale. Not even close. The largest factored number via quantum methods is laughably small compared to a 2048-bit RSA key.

But that's precisely the point. Intelligence agencies and well-resourced adversaries don't need to break your encryption today. They just need to store it. Traffic intercepted in 2025, decrypted in 2035, that's the Harvest Now, Decrypt Later threat model, and it's not theoretical paranoia.

It's the reason NIST started the PQC standardisation process in 2016, a full decade before anyone expects a cryptographically capable quantum computer to exist.

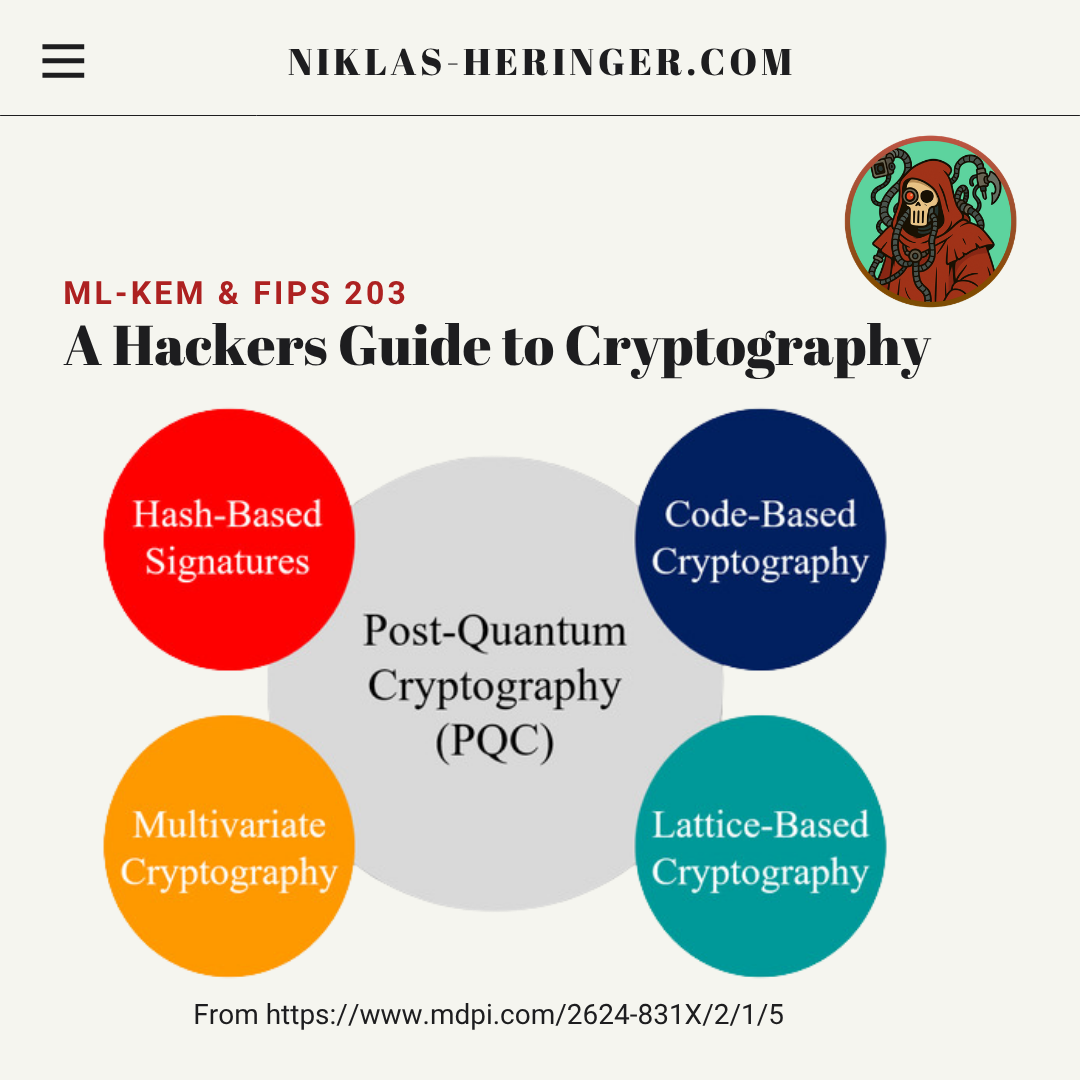

So what math doesn't quantum computers destroy? Lattices.

The New Hard Problem: Hiding in a Lattice

Interactive 2D lattice on dark background demonstrating the Closest Vector Problem. Drag the red query point q to see which lattice point is nearest.

Drag q; the dashed ring radius equals the distance to the nearest lattice point

Trivial in 2D · Exponentially hard in 768 dimensions · That hardness secures ML-KEM

A lattice is just a grid of points, but in very high dimensions. Think of the integer coordinate points in 2D space: $(0,0), (1,0), (0,1), (1,1) \ldots$ That's a simple 2D lattice. Now imagine that, but in 768 dimensions.

The hard problem on lattices is called the Closest Vector Problem (CVP):

Given a lattice and a point that's near (but not on) the lattice, find the nearest lattice point.

In 2D, you can see the answer. In 768 dimensions, even the fastest classical and quantum computers fail to solve this efficiently.

No known quantum algorithm gives a meaningful speedup against lattice problems. That's why we're here.

The Math Foundation

Alright, let's build from the ground up. If you came from Part One, you remember groups and fields. We're going to build something new on top of that: a polynomial ring.

Polynomials: Nothing New

You know polynomials from school:

$$f(x) = 3x^3 + x^2 - 5x + 2$$

Just a sum of $x$-powers with coefficients. Nothing scary.

Now, two things:

First: What if the coefficients aren't regular integers, but integers modulo some prime $q$? Remember from Part One: modular arithmetic wraps numbers around like a clock. We pick $q = 3329$ for ML-KEM. Every coefficient lives in ${0, 1, 2, \ldots, 3328}$.

Why $q = 3329$? It's prime (which guarantees nice algebraic properties) and specifically chosen so that the Number Theoretic Transform, the fast multiplication algorithm, works efficiently at this size. It's not random, it's precisely engineered.

Second: What if we cap the degree of our polynomial at 255? Polynomials of degree 256 and above get reduced using the special polynomial $X^{256} + 1$.

When you divide any polynomial by $X^{256} + 1$, you keep the remainder, just like mod. The result always has degree at most 255, so exactly 256 coefficients.These two constraints together define our polynomial ring:

$$R_q = \mathbb{Z}_q[X] / (X^{256} + 1)$$

Every element of $R_q$ looks like:

$$a_0 + a_1 X + a_2 X^2 + \ldots + a_{255} X^{255}$$

where every $a_i \in {0, 1, \ldots, 3328}$.

You can add and multiply these polynomials; addition is coefficient-wise mod $q$, multiplication is polynomial multiplication followed by reduction mod $(X^{256}+1)$ and mod $q$.

Why does crypto care about $R_q$?

Each polynomial is a container for 256 numbers. Operations on polynomials are parallelisable and map beautifully to hardware (NTT, more on that in a moment). This is how we get cryptography that's both mathematically hard to break and fast enough to run on embedded devices.

Modules: Vectors of Polynomials

In ML-KEM we don't work with individual polynomials. We work with vectors and matrices whose entries are polynomials from $R_q$.

A vector $\mathbf{s} \in R_q^k$ looks like:

$$\mathbf{s} = \begin{pmatrix} f_1 \ f_2 \ \vdots \ f_k \end{pmatrix}$$

where each $f_i$ is a full polynomial with 256 coefficients. One such vector at $k = 3$ is secretly $3 \times 256 = 768$ numbers packed into a neat algebraic structure.

A matrix $\mathbf{A} \in R_q^{k \times k}$ is $k^2$ such polynomials, at $k = 3$, that's $9 \times 256 = 2304$ numbers. Enormous. But generated from a tiny 32-byte seed (more on that later).

The Hard Problem: Module-LWE

Here's where the lattice hardness sneaks in. Module Learning With Errors (MLWE) is the core assumption that makes ML-KEM secure.

It works like this.

Take a random matrix $\mathbf{A} \in R_q^{k \times k}$. Take a short secret vector $\mathbf{s} \in R_q^k$ (short = small coefficients). Add a tiny random error vector $\mathbf{e} \in R_q^k$ (also short):

$$\mathbf{t} = \mathbf{A} \cdot \mathbf{s} + \mathbf{e}$$

The MLWE problem: given $(\mathbf{A}, \mathbf{t})$, find $\mathbf{s}$.

Interactive MLWE diagram. Amber dot shows lattice point As. Drag the red t point to add error e and see the CVP problem appear.

η = 0 · t lies exactly on the lattice — solvable with Gaussian elimination

Drag the red t away from As to add error e

If there were no error $\mathbf{e}$, you could solve $\mathbf{t} = \mathbf{A} \cdot \mathbf{s}$ with linear algebra (Gaussian elimination). Easy.

The error $\mathbf{e}$ pushes $\mathbf{t}$ slightly off the lattice. Now it's the Closest Vector Problem. No efficient algorithm exists, classical or quantum, for finding $\mathbf{s}$ given only $(\mathbf{A}, \mathbf{t})$.

What does "short" mean?

The secret and error vectors are sampled from a Centered Binomial Distribution $\beta_\eta$. Concretely: you flip $2\eta$ coins, count the heads, subtract $\eta$. The result is always in ${-\eta, \ldots, +\eta}$, clustered around 0.

For ML-KEM-768: $\eta_1 = \eta_2 = 2$, so coefficients in ${-2, -1, 0, 1, 2}$.

Tiny numbers. But they make the math irreversible.

The Three Operations of ML-KEM

A KEM has three algorithms. Let's build them.

KeyGen: Setting Up the Locked Box

Bob runs KeyGen once. He generates:

- A public key (the open padlock, shareable with anyone)

- A private key (the key only he has)

Step 1: Generate the matrix $\mathbf{A}$

Instead of storing a $k \times k$ matrix of polynomials, which would be megabytes, Bob stores a 32-byte random seed $\rho$.

$$\mathbf{A} \leftarrow \text{SeedExpandA}(\rho)$$

Both parties can regenerate $\mathbf{A}$ deterministically from $\rho$ using SHAKE-128, an Extendable Output Function (XOF); a hash function that produces arbitrarily long output. Feed it $\rho$, get as many pseudo-random bytes as you need to fill all the polynomial coefficients.

XOF is just a hash function you can squeeze output from indefinitely. SHA-3 and SHAKE are the two XOFs used throughout ML-KEM.

Step 2: Sample small secret and error vectors

$$\mathbf{s}, \mathbf{e} \xleftarrow{$} \beta_\eta^k$$

These are sampled using another 32-byte seed $\sigma$, again via SHAKE-256. Deterministic, reproducible, but cryptographically indistinguishable from random if you don't know $\sigma$.

Step 3: Compute the public key

$$\mathbf{t} = \mathbf{A} \cdot \mathbf{s} + \mathbf{e}$$

This is the MLWE instance. $\mathbf{A}$ and $\mathbf{t}$ go into the public key. $\mathbf{s}$ goes into the private key. $\mathbf{e}$ gets thrown away; it's baked into $\mathbf{t}$ and nobody needs it again.

Public key: $(\rho, \mathbf{t})$; just the seed and the MLWE output.

Private key: $\mathbf{s}$; the short secret vector.

ML-KEM KeyGen flowchart: two seeds rho and sigma feed separate pipelines that converge to compute t = As + e, which then splits into the public key and private key.

e is discarded after KeyGen, it's baked into t and never needed again

Encapsulation: Alice Locks the Box

Alice has Bob's public key $(\rho, \mathbf{t})$. She wants to generate a random key $K$ and send it to Bob without anyone intercepting it.

Step 1: Regenerate $\mathbf{A}$

Alice runs the same SeedExpandA$(\rho)$. She gets the identical $\mathbf{A}$ Bob used. No secret knowledge needed, $\rho$ is public.

Step 2: Sample fresh small vectors

$$

\mathbf{r}, \mathbf{e}_1 \xleftarrow{$} \beta \eta^k, \quad e_2 \xleftarrow{$} \beta \eta

$$

These are Alice's ephemeral randomness. One vector $\mathbf{r}$, another vector $\mathbf{e}_1$, and a scalar polynomial $e_2$.

Step 3: Pick a random message

$$

m \xleftarrow{$} {0,1}^{256}

$$

This is 256 bits of randomness that will become the shared key $K$. Note: $m$ itself is not $K$, it's the seed. $K = H(m)$ at the end.

Step 4: Build the ciphertext

$$\mathbf{u} = \mathbf{A}^T \cdot \mathbf{r} + \mathbf{e}_1$$

$$v = \mathbf{t}^T \cdot \mathbf{r} + e_2 + \left\lfloor \frac{q}{2} \right\rceil \cdot m$$

The ciphertext is $(\mathbf{u}, v)$.

Let's unpack $v$ intuitively. The term $\lfloor q/2 \rceil \cdot m$ encodes each bit of $m$ into a polynomial coefficient: a $0$ bit becomes $0$, a $1$ bit becomes $\lfloor 3329/2 \rceil = 1665$, roughly the "midpoint" of $\mathbb{Z}_q$. Then $\mathbf{t}^T \cdot \mathbf{r} + e_2$ adds noise on top. It looks like random garbage, unless you have $\mathbf{s}$.

Step 5: Derive the shared key

$$K = H(m)$$

Alice now holds $K$. She sends $(\mathbf{u}, v)$ to Bob.

Interactive MLWE diagram. Amber dot shows lattice point As. Drag the red t point to add error e and see the CVP problem appear.

η = 0 · t lies exactly on the lattice — solvable with Gaussian elimination

Drag the red t away from As to add error e

Decapsulation: Bob Opens the Box

Bob receives $(\mathbf{u}, v)$ and has his private key $\mathbf{s}$.

Step 1: Compute the "noisy $m$"

$$v - \mathbf{s}^T \cdot \mathbf{u}$$

Let's expand this fully. Substituting the definitions of $v$ and $\mathbf{u}$:

$$= \mathbf{t}^T \mathbf{r} + e_2 + \lfloor q/2 \rceil m - \mathbf{s}^T(\mathbf{A}^T \mathbf{r} + \mathbf{e}_1)$$

$$= (\mathbf{A}\mathbf{s} + \mathbf{e})^T \mathbf{r} + e_2 + \lfloor q/2 \rceil m - \mathbf{s}^T \mathbf{A}^T \mathbf{r} - \mathbf{s}^T \mathbf{e}_1$$

The $\mathbf{s}^T \mathbf{A}^T \mathbf{r}$ terms cancel (since $(\mathbf{As})^T\mathbf{r} = \mathbf{s}^T\mathbf{A}^T\mathbf{r}$), leaving:

$$= \overbrace{\mathbf{e}^T \mathbf{r} + e_2 - \mathbf{s}^T \mathbf{e}_1}^{\text{small noise}} + \left\lfloor \frac{q}{2} \right\rceil \cdot m$$

Step 2: Round to recover $m$

Each coefficient of the result is either:

- Close to $0$ (if the original bit was $0$)

- Close to $1665$ (if the original bit was $1$)

Round to the nearest: below $q/4$ → bit $0$, above $q/4$ → bit $1$. You recover $m$ exactly.

Step 3: Derive $K$

$$K = H(m)$$

Bob now holds the same $K$ as Alice. The key exchange is complete, $K$ was never transmitted.

Interactive error floor: number line from 0 to q showing bit-0 and bit-1 decoding zones. Drag slider to increase noise and see when decryption fails.

η = 55 · zones stay separate (limit: q/4 = 832) — rounding recovers m exactly

ML-KEM actual noise: η₁ = η₂ = 2, total error ≈ 4–8 — far below the limit

Why does crypto care about this noise structure?

Without noise, $\mathbf{t} = \mathbf{A}\mathbf{s}$ is solvable by linear algebra. With noise, it's the lattice Closest Vector Problem. But the noise must be small enough that decapsulation still works, hence the carefully chosen parameters.

This tradeoff between security (noise must exist) and correctness (noise must not dominate) is the central engineering challenge of lattice cryptography.

The Invisible Upgrade: CPA → CCA2

What we've described so far is technically KYBER-PKE: a Public Key Encryption scheme that's IND-CPA secure.

CPA = Chosen Plaintext Attack. An attacker can choose plaintexts and see their encryptions. A CPA-secure scheme hides the plaintext.

But we need more. We need IND-CCA2: security against a Chosen Ciphertext Attack. An attacker can also submit ciphertexts of their choice and see the decryptions. This is a much stronger model, and it's the real-world threat for KEMs.

Without CCA2 security, an attacker could:

- Intercept a ciphertext $(\mathbf{u}, v)$

- Tweak it slightly → $(\mathbf{u}', v')$

- Submit to Bob's decapsulator and observe the output

- Use the difference to learn bits of $\mathbf{s}$

This is a decryption oracle attack, and KYBER-PKE is vulnerable to it.

The Fujisaki-Okamoto Transform

The fix is the Fujisaki-Okamoto (FO) Transform. It's applied once, transforming the CPA scheme into a CCA2-secure KEM. ML-KEM = KYBER-PKE + FO.

The trick has three parts:

1. Encaps is made deterministic

Instead of sampling $m$ truly randomly, it's derived from a hash of the public key and a random seed:

$$m = H(\text{seed}, H(\text{pk}))$$

The encapsulation randomness $\mathbf{r}$ is then derived deterministically from $m$:

$$\mathbf{r} = G(m)$$

This means: for a given $m$, there's exactly one valid ciphertext. Any modification to $(\mathbf{u}, v)$ produces a ciphertext that corresponds to no valid $m$.

2. Decaps verifies the ciphertext by re-encrypting

After recovering $m'$ from the ciphertext, Decaps doesn't just output $H(m')$. It:

- Re-derives $\mathbf{r}' = G(m')$

- Re-runs Encaps with this $\mathbf{r}'$ to get $(\mathbf{u}^, v^)$

- Checks: is $(\mathbf{u}^, v^) \stackrel{?}{=} (\mathbf{u}, v)$?

If they match: the ciphertext is valid. Output $K = H(m')$.

3. Implicit rejection for invalid ciphertexts

If they don't match: instead of returning an error (which leaks information), Decaps outputs a pseudorandom value derived from a secret rejection key $z$ baked into the private key:

$$K = H(z, (\mathbf{u}, v))$$

This is called implicit rejection and it's what elevates ML-KEM from a toy to a real-world-deployable primitive.

FO-Transform flow diagram: two paths from decapsulation — valid ciphertext returns K = H(m prime), invalid returns K = H(z, ciphertext). Both look identical to an attacker.

Why does crypto care about implicit rejection?

"Return an error on bad input" sounds harmless. It's not. Differences in error behaviour, even timing differences of nanoseconds, can leak secret key bits to a patient attacker. Implicit rejection makes every decapsulation call look identical from the outside, regardless of whether the ciphertext was valid or forged. No oracle, no leak.

The Circle Closes

Here's the full picture side by side:

| Encaps (Alice) | Decaps (Bob) | |

|---|---|---|

| Input | Bob's public key $(\rho, \mathbf{t})$, random $m$ | Private key $\mathbf{s}$, ciphertext $(\mathbf{u}, v)$ |

| Key knowledge | Only public info | Knows secret $\mathbf{s}$ |

| Core computation | $\mathbf{u} = \mathbf{A}^T\mathbf{r} + \mathbf{e}_1$, $v = \mathbf{t}^T\mathbf{r} + e_2 + \lfloor q/2\rceil m$ | $v - \mathbf{s}^T\mathbf{u}$ → round → $m'$ |

| Verification | : | Re-encrypt with $m'$, compare ciphertext |

| Output | $(\mathbf{u}, v)$ + shared key $K = H(m)$ | Shared key $K = H(m')$ or rejection |

Alice builds the noise structure around $m$. Bob tears it apart using $\mathbf{s}$, the only thing that makes the noise terms cancel. Nobody else can do what Bob does, because nobody else knows $\mathbf{s}$, and finding $\mathbf{s}$ from $\mathbf{t}$ is the lattice hard problem.

Parameters

ML-KEM comes in three variants. The parameter $k$ controls the module dimension; bigger $k$, bigger lattice, more security.

| Variant | $k$ | $\eta_1$ | $\eta_2$ | Security level | Public key | Ciphertext |

|---|---|---|---|---|---|---|

| ML-KEM-512 | 2 | 3 | 2 | ~128 bit (AES-128) | 800 B | 768 B |

| ML-KEM-768 | 3 | 2 | 2 | ~192 bit (AES-192) | 1184 B | 1088 B |

| ML-KEM-1024 | 4 | 2 | 2 | ~256 bit (AES-256) | 1568 B | 1568 B |

For context: an RSA-2048 public key is 256 bytes, and its ciphertext is 256 bytes, but it's broken by quantum. An ML-KEM-768 public key is 1184 bytes, about 4.6× larger, and survives quantum attacks. That's the price of the upgrade.

In practice, the extra bytes are irrelevant. A TLS handshake already moves kilobytes. An extra kilobyte of post-quantum public key doesn't move the needle.

One Fast Aside: NTT

You might wonder: multiplying polynomials with 256 coefficients, done millions of times per second in a TLS handshake, isn't that slow?

It would be, naively. Polynomial multiplication is $O(n^2)$; quadratic in the number of coefficients.

ML-KEM uses the Number Theoretic Transform (NTT), the integer analog of the Fast Fourier Transform. It reduces polynomial multiplication to $O(n \log n)$. At $n = 256$, that's the difference between 65,536 operations and roughly 2,048. The specific choice of $q = 3329$ was made precisely because it supports an efficient NTT at this size.

This is why ML-KEM is practical on microcontrollers. An ARM Cortex-M4 can do ML-KEM-768 key generation in under 1 millisecond.

What ML-KEM Is Not

Before you leave, let's clear up a common confusion.

ML-KEM is not general-purpose encryption. You cannot use it to encrypt an arbitrary message. It encapsulates exactly one random key $K$. Use $K$ with AES-GCM or ChaCha20-Poly1305 for the actual payload.

ML-KEM is not a signature scheme. For signatures (authentication, integrity), you want ML-DSA (FIPS 204). The math is similar, same rings, same MLWE foundation, but the construction is completely different, built on the Fiat-Shamir paradigm. That's a story for another post.

ML-KEM is not a silver bullet. It protects the key exchange. If your implementation leaks timing information, or if you reuse keys incorrectly, or if you deploy it against a side-channel attack, the math doesn't save you. The security proof is in the ROM (Random Oracle Model), your hash functions need to be good, your RNG needs to be good, your comparison needs to be constant-time.

Wrapping Up

Here's what you now understand that most engineers working with TLS don't:

- Why RSA and ECDH die against quantum computers (Shor's algorithm)

- What a lattice is and why the Closest Vector Problem is hard

- How polynomial rings pack 256 numbers into a single algebraic object

- How MLWE hides a secret inside an apparently random linear equation

- How KeyGen, Encaps, and Decaps compose into a working KEM

- Why the noise term cancels for the legitimate receiver but not for the attacker

- How the Fujisaki-Okamoto transform upgrades CPA security to CCA2

- What implicit rejection is and why error oracles are dangerous

The next post in this series will be ML-DSA, the signature half of the NIST PQC suite. If you've followed this one, you already know the rings. The new ideas are commitment schemes, Fiat-Shamir, rejection sampling, and why signing needs a fundamentally different structure than key encapsulation.

Grab a coffee. The math gets weirder and cooler.

Questions, corrections, abuse? Drop them in the comments or find me on GitHub.

No spam, no sharing to third party. Only you and me.

Member discussion